AI for Anti-Money Laundering

Graph Deep Learning at MIT-IBM

In my work at the MIT-IBM Watson AI Lab, I am collaborating with a special group of people inspired to harness the powers of deep learning and high-performance computing to fight money laundering. Anti-money laundering (AML) is a complex problem and we don’t have delusions of being superheroes who save the day, but we believe AI can play a powerful role and we’re here to do our part as researchers. We’re especially excited about the potential of Graph Convolutional Networks (GCNs), an emergent class of methods capable of capturing relational information, which is important given the complex layering and obfuscation schemes used by sophisticated criminal networks. The purpose of this page is to share our motivation and progress as we pursue this work, in the hope of inviting feedback and inspiring others to join us in the cause.

One year in, we can summarize our work-to-date as follows:

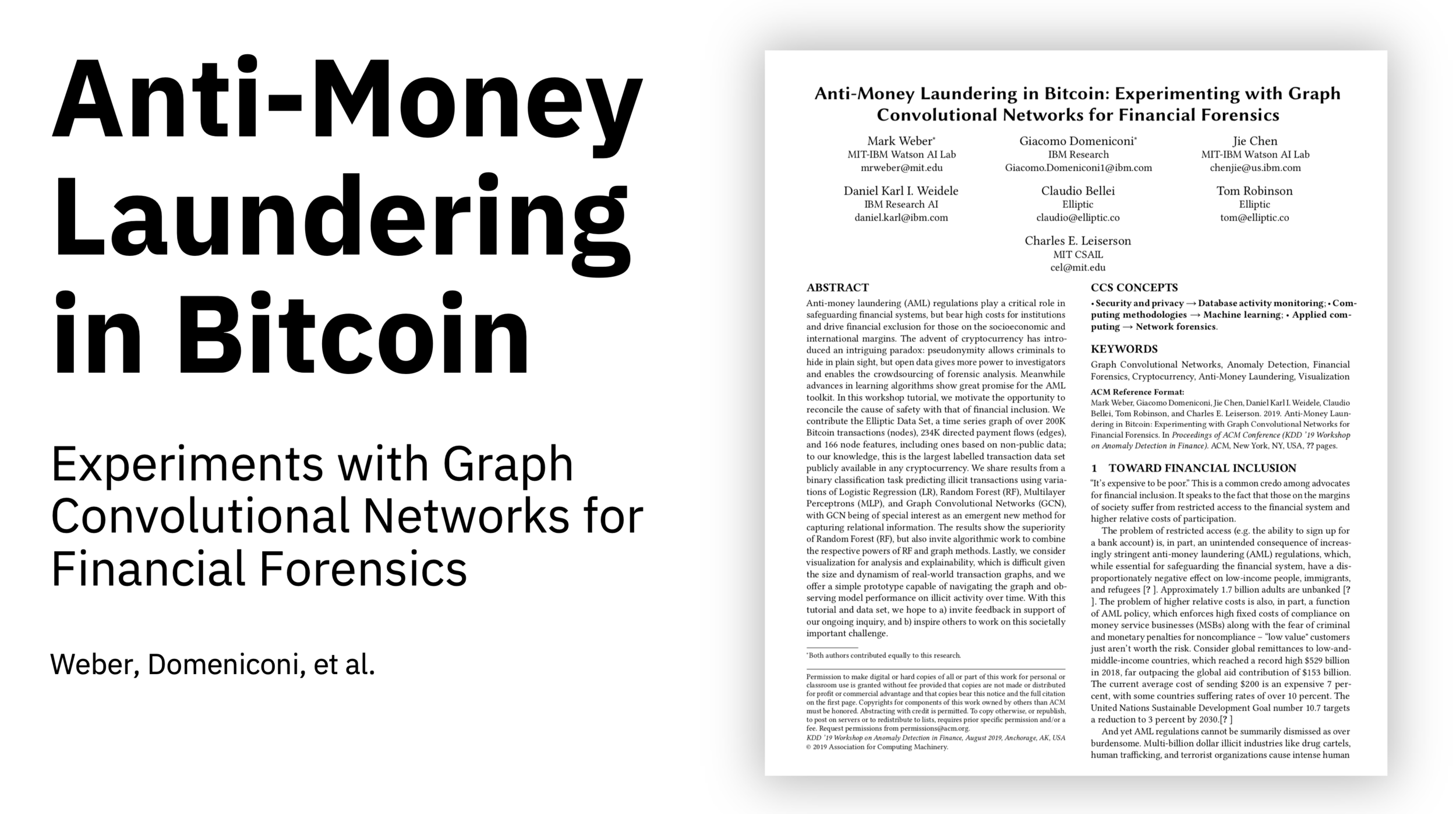

Presented at the KDD 2019 Workshop on Anomaly Detection in Finance

EvolveGCN: Evolving Graph Convolutional Networks for Dynamic Graphs

Scalable Graph Learning for Anti-Money Laundering: A First Look

Presented at the NeurIPS 2018 AI in Finance Workshop

AML as a social impact priority

“It's expensive to be poor.” This is a common credo among advocates for financial inclusion. It speaks to the fact that those on the margins of society suffer from restricted access to the financial system and higher relative costs of participation.

The problem of restricted access (e.g. the ability to sign up for a bank account) is, in part, an unintended consequence of increasingly stringent anti-money laundering (AML) regulations, which, while essential for safeguarding the financial system, have a disproportionately negative effect on low-income people, immigrants, and refugees (World Bank 2012). Approximately 1.7 billion adults are unbanked (World Bank 2017). The problem of higher relative costs is also, in part, a function of AML policy, which imposes high fixed costs of compliance on money service businesses (MSBs) along with the fear of criminal and monetary penalties for noncompliance: “low value” customers just aren't worth the risk. Consider global remittances to low- and middle-income countries, which reached a record high of $529 billion in 2018, far outpacing the global aid contribution of $153 billion. The current average cost of sending $200 is an expensive 7 percent, with some countries suffering rates of over 10 percent. The United Nations Sustainable Development Goal number 10.7 targets a reduction to 3 percent by 2030 (World Bank 2019).

And yet AML regulations cannot be summarily dismissed as overly burdensome. Multibillion-dollar illicit industries like drug cartels, human trafficking, and terrorist organizations cause intense human suffering around the world. The Mexican drug cartels have murdered 150,000 people since 2006; upwards of 700,000 people per year are “exported” in a human trafficking industry enslaving an estimated 40 million people. These nefarious industries rely on sophisticated money laundering schemes to operate.

The recent 1Malaysia Development Berhad (1MDB) money laundering scandal robbed the Malaysian people of over $11 billion in taxpayer funds earmarked for the nation's development, with mega-fines and criminal indictments for Goldman Sachs, among others implicated in the wrongdoing. The even more recent Danske Bank money laundering scandal in Estonia, which served as a hub for an estimated $200 billion in illicit money flows from Russia and Azerbaijan, similarly exacted an incalculable toll on innocent citizens of these countries and left implicated institutions like Danske Bank and Deutsche Bank with billions of dollars in losses.

The bad guys are winning

Global costs of AML compliance run in the tens of billions of dollars and have been growing at about 15% per year since 2004. Despite this, we are woefully ineffective at preventing money laundering. Europol estimates only 1% of illicit funds are confiscated (Europol 2017). To understand why, we consider both the technical and behavioral challenges, as well as the current methods for addressing them.

Technically, we have a needle-in-a-haystack problem of entity classification and hidden pattern discovery in massive, dynamic, high dimensional, time-series transaction data sets with high noise-to-signal ratios, combinatorial complexity, and non-linearity. Data sets are often fragmented, inaccurate, incomplete, and/or inconsistent, within as well as across organizations. Synthesizing information from multimodal data streams is difficult to automate, and so it falls to resource-constrained human analysts. Behaviorally, the heavy hand of compliance (i.e. the cost asymmetry of a false positive versus a false negative) motivates over-reporting, leading to a secondary needle-in-a-haystack problem for resource-constrained government law enforcement.

A recent Reuters survey of C-suite executives at 2,373 large global organizations provides color on how these challenges play out for stakeholders. An estimated 5% of reviewed transactions are filed as Suspicious Activity Reports (SARs) and only 10% of those receive a meaningful investigation by law enforcement. Thus only about 0.5% (5% × 10%) of reviewed alerts lead to action. Each organization transacts with thousands of third-party vendors and millions of clients, with finance and insurance companies comprising the upper fractiles of the distribution. Internal monitoring is just as important; respondents estimated 58% of financial crimes involving human trafficking were internal to their own organizations (Reuters 2018).
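The funnel arithmetic in the survey figures above can be checked in a few lines. This is only a back-of-the-envelope sketch: the two rates come from the paragraph above, while the function name `funnel_rate` and the batch of 100,000 reviewed transactions are illustrative assumptions, not survey data.

```python
def funnel_rate(*stage_rates):
    """Multiply per-stage pass-through rates into one end-to-end rate."""
    rate = 1.0
    for r in stage_rates:
        rate *= r
    return rate

sar_filing_rate = 0.05      # share of reviewed transactions filed as SARs (from the text)
investigation_rate = 0.10   # share of SARs that get a meaningful investigation (from the text)

end_to_end = funnel_rate(sar_filing_rate, investigation_rate)
print(f"{end_to_end:.2%}")  # 0.50%

# Hypothetical batch size, chosen only to make the funnel concrete.
reviewed = 100_000
filed = round(reviewed * sar_filing_rate)
investigated = round(filed * investigation_rate)
print(reviewed, filed, investigated)
```

In other words, under these rates roughly one reviewed transaction in two hundred ends in a meaningful investigation.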

Despite tremendous resources dedicated to anti-money laundering (AML), only a tiny fraction of illicit activity is prevented. This is a sign that we don’t need more resources; we need better tools.

AI for AML: Graph Convolutional Networks

We've seen deep learning do remarkable things on Euclidean data such as audio, images, and video. Not so much on graph data, until very recently. Graph data is structurally different; it's all about relationships between data points. Think social networks, gene expression networks, knowledge graphs, you name it: graphs are all around us. In finance we can think about trading, hedging, and asset management, supply chain finance and optimization, lending and securitization. Each of these can use graphs to capture relationships and interactions between different types of entities, often with a time series component, and often in a dynamic setting. The problem is, deep learning on graph data is extremely difficult computationally due to the combinatorial complexity and nonlinearity inherent to graphs of any meaningful size and density. And it's precisely the information hidden in that complexity that makes graph data so interesting and important.

Recently we've seen a rapid and exciting acceleration of work on graph convolutional networks, or GCNs, with special attention to the question of scaling (Kipf and Welling). With GCNs, we begin with certain attributes to describe the nodes and edges, and we use convolutions over the graph to pull out the hidden properties and patterns. This is called node embedding and the objective is to achieve a better vector representation of each entity. In layman’s terms, you can think of each node asking the age-old question, “Who am I?” It’s really an existential question with infinite complexity, but we need a vector of finite length. So we have to bound the model or it’s going to take a prohibitively long time, and find a way to do so without sacrificing accuracy. This is the challenge of scalability.
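To make "convolutions over the graph" a little more concrete, here is a minimal sketch of a single graph convolution layer in the form popularized by Kipf and Welling, H' = ReLU(D^{-1/2} (A + I) D^{-1/2} H W). It is not our production code: the four-account graph, the two input features per account, and the fixed (untrained) weight matrix are all made-up values for illustration, and the matrices are plain Python lists so the example has no dependencies.

```python
import math

def normalized_adjacency(edges, n):
    """Return D^{-1/2} (A + I) D^{-1/2} as a dense n x n list of lists."""
    # Start from the identity so every node keeps its own features (self-loops).
    a = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for u, v in edges:
        a[u][v] = 1.0
        a[v][u] = 1.0  # treat the graph as undirected
    deg = [sum(row) for row in a]
    return [[a[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]

def matmul(x, y):
    """Dense matrix product of two list-of-lists matrices."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]

def gcn_layer(a_hat, h, w):
    """One graph convolution: ReLU(a_hat @ h @ w)."""
    z = matmul(matmul(a_hat, h), w)
    return [[max(0.0, value) for value in row] for row in z]

# Hypothetical toy graph: 4 accounts linked in a chain of transactions.
edges = [(0, 1), (1, 2), (2, 3)]
# Hypothetical node attributes: 2 features per account.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.2]]
# Hypothetical untrained weights mapping 2 input features to 2 embedding dimensions.
weights = [[0.6, -0.4], [0.3, 0.8]]

a_hat = normalized_adjacency(edges, n=4)
embeddings = gcn_layer(a_hat, features, weights)
for node, vector in enumerate(embeddings):
    print(node, [round(value, 3) for value in vector])
```

Each output row is a finite-length answer to that node's "Who am I?", computed from its own attributes and those of its direct neighbors. Stacking layers widens the neighborhood each node sees, which is where the cost grows and scalability becomes the issue.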

At ICLR 2018, my colleagues Jie Chen and Tengfei Ma presented a new method called FastGCN. And if I may sing their praises for a moment, this work was a big step forward on scalability. FastGCN was able to beat previous speed benchmarks by two orders of magnitude. It does so by using a variant method for importance sampling and by performing integral transformations in node embedding to account for node inter-dependency.
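The core idea of importance sampling in this setting can be sketched as follows. Instead of aggregating over every node, we sample a few nodes with probability proportional to the squared norm of their column in the normalized adjacency matrix, then reweight each sampled term by one over its probability so the estimate stays unbiased. This is a simplified illustration in the spirit of FastGCN, not the authors' implementation: the three-node matrix, the feature values, the sample size, and all function names are assumptions made for the example.

```python
import math
import random

def sampling_distribution(a_hat):
    """q(u) proportional to the squared norm of column u of a_hat."""
    n = len(a_hat)
    norms = [sum(a_hat[i][u] ** 2 for i in range(n)) for u in range(n)]
    total = sum(norms)
    return [value / total for value in norms]

def exact_aggregate(a_hat, h):
    """Full neighborhood aggregation a_hat @ h over all nodes."""
    n, d = len(a_hat), len(h[0])
    return [[sum(a_hat[v][u] * h[u][k] for u in range(n)) for k in range(d)]
            for v in range(n)]

def sampled_aggregate(a_hat, h, nodes, q):
    """Monte Carlo estimate of a_hat @ h using only the sampled nodes."""
    t, n, d = len(nodes), len(a_hat), len(h[0])
    return [[sum(a_hat[v][u] * h[u][k] / q[u] for u in nodes) / t
             for k in range(d)] for v in range(n)]

# Hypothetical normalized adjacency for a 3-node chain with self-loops.
s = 1.0 / math.sqrt(6.0)
a_hat = [[0.5, s, 0.0],
         [s, 1.0 / 3.0, s],
         [0.0, s, 0.5]]
# Hypothetical node features: 2 per node.
h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

q = sampling_distribution(a_hat)
rng = random.Random(0)                        # fixed seed for repeatability
nodes = rng.choices(range(3), weights=q, k=2) # sample 2 of 3 nodes, with replacement
approx = sampled_aggregate(a_hat, h, nodes, q)
exact = exact_aggregate(a_hat, h)
print("sampled nodes:", nodes)
print("approx:", approx)
print("exact: ", exact)
```

On a three-node graph the saving is nil, of course. The point is that the cost of `sampled_aggregate` depends on the number of sampled nodes rather than on the size of the graph, which is what makes this style of estimator attractive for very large transaction networks.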

Building on FastGCN, we're now exploring how we can capture the power of graph deep learning for anti-money laundering. We invite you to review our work-to-date, provide your feedback, and join us in this effort.